Two posters at NeurIPS

- 12 December 2019

- Autonomous Learning

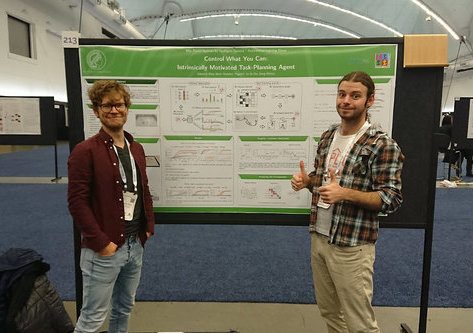

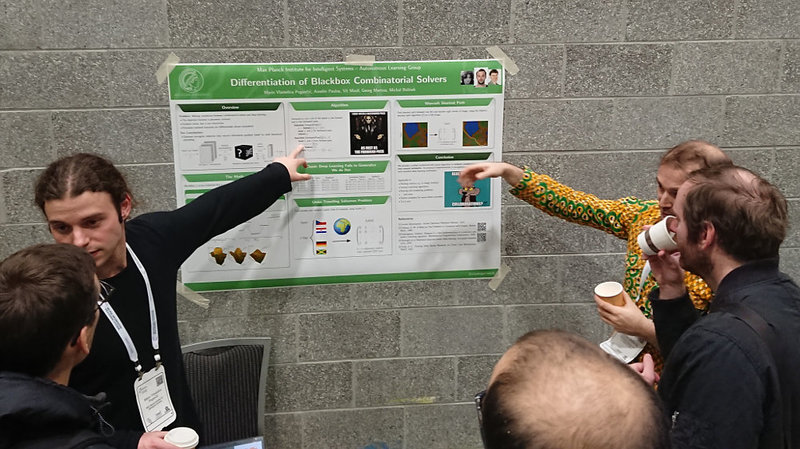

We presented two posters at NeurIPS: "Control What You Can" and "Differentiation of Blackbox Combinatorial Solvers".

It was a great pleasure to present two recent works from our lab at NeurIPS 2019 in Vancouver:

Control What You Can: Intrinsically Motivated Task-Planning Agent:

We present a novel intrinsically motivated agent that learns how to control the environment in the fastest possible manner by optimizing learning progress. It learns what can be controlled, how to allocate time and attention, and the relations between objects using surprise-based motivation. The effectiveness of our method is demonstrated in a synthetic as well as a robotic manipulation environment, yielding considerably improved performance and smaller sample complexity. In a nutshell, our work combines several task-level planning agent structures (backtracking search on the task graph, probabilistic roadmaps, allocation of search efforts) with intrinsic motivation to achieve learning from scratch.

Differentiation of Blackbox Combinatorial Solvers (later published at ICLR):

Achieving fusion of deep learning with combinatorial algorithms promises transformative changes to artificial intelligence. One possible approach is to introduce combinatorial building blocks into neural networks. Such end-to-end architectures have the potential to tackle combinatorial problems on raw input data such as ensuring global consistency in multi-object tracking or route planning on maps in robotics. In this work, we present a method that implements an efficient backward pass through blackbox implementations of combinatorial solvers with linear objective functions. We provide both theoretical and experimental backing. In particular, we incorporate the Gurobi MIP solver, Blossom V algorithm, and Dijkstra’s algorithm into architectures that extract suitable features from raw inputs for the traveling salesman problem, the min-cost perfect matching problem and the shortest path problem.
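To give a feel for what a backward pass through a blackbox solver can look like, here is a minimal NumPy sketch. It follows our reading of the scheme in the published paper: call the solver a second time on costs perturbed in the direction of the incoming gradient, and use the scaled difference of the two solutions as the gradient with respect to the costs. The names `toy_solver`, `blackbox_backward` and `lam`, the "pick the k cheapest items" problem, and the squared-error loss are all illustrative assumptions, not code from the paper; in the actual experiments the solver would be Gurobi, Blossom V or Dijkstra's algorithm.

```python
import numpy as np


def toy_solver(w, k=2):
    """Hypothetical blackbox solver with a linear objective:
    pick the k items with the smallest total cost w @ y, y in {0, 1}^n."""
    y = np.zeros_like(w, dtype=float)
    y[np.argsort(w, kind="stable")[:k]] = 1.0
    return y


def blackbox_backward(w, y, grad_y, solver, lam):
    """Sketch of the backward pass: one extra solver call on perturbed costs.

    w      : costs used in the forward pass
    y      : solver output from the forward pass
    grad_y : gradient of the loss with respect to y
    lam    : interpolation hyperparameter (assumed value, needs tuning)
    """
    w_perturbed = w + lam * grad_y   # push costs along the incoming gradient
    y_lam = solver(w_perturbed)      # second call to the unmodified solver
    return -(y - y_lam) / lam        # gradient with respect to the costs w


# Forward pass: the solver picks the two cheapest items.
w = np.array([1.0, 2.0, 3.0, 4.0])
y = toy_solver(w)

# Assumed loss: 0.5 * ||y - target||^2, whose gradient in y is (y - target).
target = np.array([0.0, 1.0, 1.0, 0.0])
grad_y = y - target

grad_w = blackbox_backward(w, y, grad_y, toy_solver, lam=5.0)
print(grad_w)
```

In this toy run, a gradient descent step on `w` raises the cost of item 0 and lowers the cost of item 2, which moves the solver output toward `target`. The solver itself is never differentiated or modified; it is only called twice.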

NeurIPS